
By re-creating the superblock, you should have a fully usable system.

Compress `/var/log/messages` Using `tar` and Place It in the `/mnt/raid1` Directory

Compress the messages file, and place it in the newly created raid1 directory: `sudo tar -czvf /mnt/raid1/messages.tar`.

Verify the RAID size is correct and the mount displays: `lsblk`

Note that your array is shown in the output, including the mount point: `nvme0n1 259:0 0 2G 0 disk`

With a three-disk RAID-1 array, there are more possibilities, such as using two.

Check whether the data is accessible from a degraded RAID 5 array, and back it up immediately. Do not run a CHKDSK scan on a degraded, failed, or broken RAID array.

The controller needs to configure the drives to its liking, which usually also involves writing zeros over the entire array, less the space it has written its configuration data to.
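The compression step can be rehearsed without root access. The following is a minimal sketch, not the lab's exact command: the function name `archive_log`, the `.tar.gz` archive name, and the temporary directory standing in for `/mnt/raid1` are all assumptions made for illustration.

```sh
# Sketch: archive a log file into a target directory and verify the archive.
# A temp directory plays the role of /mnt/raid1 and a sample file plays the
# role of /var/log/messages (both are stand-ins, not the lab's real paths).
archive_log() (
    src=$1        # file to archive
    dest_dir=$2   # directory that receives the archive
    # Hypothetical archive name: <file name>.tar.gz
    archive="$dest_dir/$(basename "$src").tar.gz"
    # -C stores only the file name in the archive, not the full path.
    tar -czf "$archive" -C "$(dirname "$src")" "$(basename "$src")" || exit 1
    # Listing the archive confirms that it is readable.
    tar -tzf "$archive"
)

workdir=$(mktemp -d)
mkdir "$workdir/raid1"
echo "sample log line" > "$workdir/messages"
archive_log "$workdir/messages" "$workdir/raid1"   # prints: messages
```

On the real system the same idea would apply with `/var/log/messages` as the source and `/mnt/raid1` as the destination, run with `sudo`.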

The storage capacity of a level 1 array is equal to the capacity of the smallest mirrored hard disk in a hardware RAID, or the smallest mirrored partition in a software RAID, which makes RAID 1 less space-efficient than other levels.
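The capacity rule is simply "take the minimum". A small sketch, assuming member sizes are given as whole numbers in a single unit (GiB here); `raid1_capacity` is a hypothetical helper, not part of mdadm:

```sh
# Usable capacity of a RAID-1 mirror: the size of its smallest member.
# Arguments are integer sizes, all in the same unit.
raid1_capacity() (
    smallest=$1
    for size in "$@"; do
        if [ "$size" -lt "$smallest" ]; then
            smallest=$size
        fi
    done
    echo "$smallest"
)

# Hypothetical example: mirroring a 2 GiB member with a 3 GiB member.
raid1_capacity 2 3   # prints: 2
```

The extra 1 GiB on the larger member goes unused, which is the space cost the paragraph above refers to.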

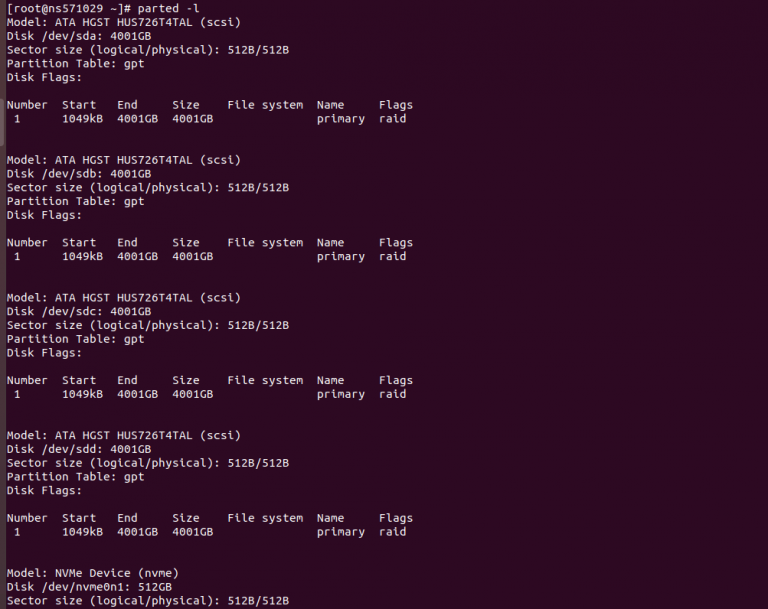

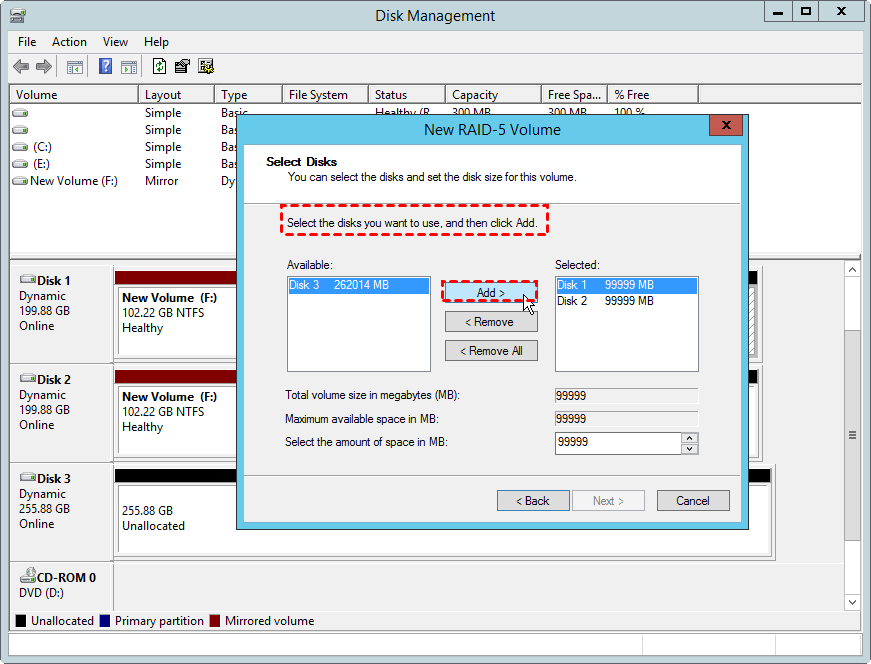

Verify mdadm Is Installed and Create a RAID 1 (`/dev/md0`) from Two of the Drives

Verify that mdadm is installed: `rpm -q mdadm`

Create the RAID 1 using two of the drives: `sudo mdadm --create /dev/md0 --level=1 --raid-devices=2 /dev/nvme0n1p1 /dev/nvme1n1p1`

Watch it being created: `sudo watch cat /proc/mdstat`

Now that the array is complete, check the status: `sudo mdadm --detail /dev/md0`

Once you are done creating the primary partition on each drive, you can create the RAID-1 array. In the short-option form of the command, `-Cv` creates an array and produces verbose output, and `-l1` (l as in 'level') indicates that this will be a RAID-1 array.

Create an XFS Filesystem on the Array and Mount It to `/mnt/raid1`

Create the filesystem: `sudo mkfs.xfs /dev/md0`

Create a directory and then mount the RAID volume into the directory: `sudo mkdir /mnt/raid1`

As far as I know, the act of creating a RAID array destroys all data on the drives used in the array during the initialization process. When you add another disk to the array, the data on the existing disk is copied to the new disk as well.

`/dev/md2` has a size of 40GB, and I want to shrink it to 30GB. Run `e2fsck -f /dev/md2` to check the file system.

Then activate your RAID arrays: `cp /etc/mdadm/nf /etc/mdadm/nforig`, then `mdadm --examine --scan > /etc/mdadm/nf`, then `mdadm -A --scan`.
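Because creating an array is destructive, it can help to print the whole sequence and review it before running anything. The sketch below only echoes commands; `raid1_plan` is a hypothetical helper, and the final `mount` line and the `-p` on `mkdir` are assumptions, since the text above names the mount step without giving its command.

```sh
# Sketch: print (not run) the commands for building and mounting a RAID 1.
# Nothing here touches a disk; review the output, then run it by hand.
raid1_plan() (
    md=$1; mnt=$2; shift 2   # array device, mount point, then member partitions
    # After the shift, $# is the member count and $* the member list.
    echo "sudo mdadm --create $md --level=1 --raid-devices=$# $*"
    echo "cat /proc/mdstat"
    echo "sudo mdadm --detail $md"
    echo "sudo mkfs.xfs $md"
    echo "sudo mkdir -p $mnt"
    echo "sudo mount $md $mnt"   # assumed mount command
)

raid1_plan /dev/md0 /mnt/raid1 /dev/nvme0n1p1 /dev/nvme1n1p1
```

Deriving `--raid-devices` from the number of members passed in keeps the count and the device list from drifting apart.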
Successfully complete this lab by achieving the following learning objectives:

Provision the Disks with a Primary Partition So They Can Be Added to the RAID Array

Get the names of the drives with no partitions: `lsblk`

Your output should match the below (the drives you’ll be using later are nvme0n1, nvme1n1, and nvme2n1): `NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT`

Create partitions on the drives, performing the following for each drive: `sudo fdisk /dev/nvme0n1`

Prompt the kernel to reread the partition table for the drive: `sudo partprobe /dev/nvme0n1`

With more recent bootloaders it is possible to load the MD support as a kernel module through the initramfs mechanism. This approach allows you to let the /boot filesystem be inside any RAID system.

Although it isn't your question, it may be useful to consult RAID Boot for more information on using initramfs to start a system booting from md volumes.
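Picking out the unpartitioned drives by eye works for three disks, but the same check can be scripted. This is a sketch under the assumption that the input has the shape of `lsblk -rno NAME,TYPE,PKNAME` output (name, type, and parent name for partitions); `unpartitioned_disks` is a hypothetical helper, and the sample lines, including the already partitioned `nvme3n1`, are made up for illustration.

```sh
# Sketch: list disks that have no partitions.
# Reads lines of "NAME TYPE [PKNAME]" on stdin.
unpartitioned_disks() {
    awk '
        $2 == "disk" { disks[++n] = $1 }     # remember disks in input order
        $2 == "part" { has_part[$3] = 1 }    # mark the parent of each partition
        END {
            for (i = 1; i <= n; i++)
                if (!(disks[i] in has_part)) print disks[i]
        }
    '
}

# Made-up sample input; on a real system the assumption is that you would
# pipe in:  lsblk -rno NAME,TYPE,PKNAME
printf '%s\n' \
    'nvme0n1 disk' \
    'nvme1n1 disk' \
    'nvme3n1 disk' \
    'nvme3n1p1 part nvme3n1' | unpartitioned_disks
# prints:
# nvme0n1
# nvme1n1
```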

It is generally true that you need a separate /boot unless you want to boot the system from a single one of the two RAID1 disks and then remount it as md after the system is running, or set up an appropriate initramfs.

Since support for MD is found in the kernel, there is an issue with using it before the kernel is running: it is not present if the boot loader is either (e)LiLo or GRUB legacy. In order to circumvent this problem, a /boot filesystem must be used either without md support or else with RAID1. In the latter case the system will boot by treating the RAID1 device as a normal filesystem, and once the system is running it can be remounted as md and the second disk added to it. This will result in a catch-up, but /boot filesystems are usually small.
